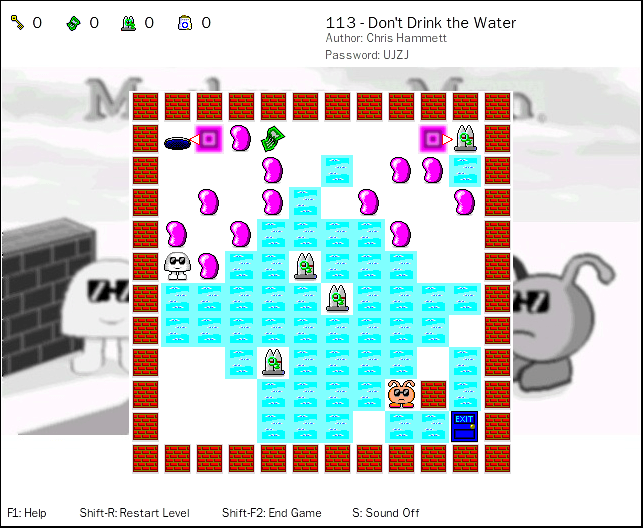

Here’s something that might sound strange. Today, while playing the retro puzzle game “Mushroom Man” — a great throwback to “Chip’s Challenge” — I noticed how similar one of the levels was to solving a programming contest question. In level 113 “Don’t Drink the Water,” there is a large number of water tiles and only a limited amount of oxygen available to cross those tiles. At one point, I found myself on a spot where all paths left me exactly 1 oxygen short. Eventually, I needed to work backwards, counting the tiles to look for a solution. The shape of the level — a 10×10 grid with the goal in the corner — reminded me a lot of a dynamic programming solution where you would fill in a 2D integer array.

Dynamic Programming?

The origin of the term dynamic programming has very little to do with writing code. It was first coined by Richard Bellman in the 1950s, a time when computer programming was an esoteric activity practiced by so few people as to not even merit a name. Back then programming meant “planning,” and “dynamic programming” was conceived to optimally plan multistage processes.

– https://www.cs.berkeley.edu/~vazirani/algorithms/chap6.pdf

Dynamic programming is about breaking a large problem into sub-problems, and using those sub-solutions to find the large solution. While you could write a DP solution recursively, it’s usually much more efficient to start with the smallest problem, and iteratively build up to the large one. A lot of the classic examples, such as the longest subsequence problem, can be solved by building a 2-dimensional table. For that reason, I like to think of DP algorithms as table-building.

Level 113 of Mushroom Man. Each oxygen tank lets you cross 3 water tiles.

This level is a great candidate for table-building because it has practically no obstacles and the few that exist can be ignored (the pink jellybeans can be pushed aside, and the purple teleporters only serve to force you along a certain path at the very start). It also doesn’t feature a lot of different items or dependencies, so it doesn’t require any backtracking (e.g. to find the best order to acquire items). Overall, it’s one of the easier levels, but it does have one devious trick.

Building Up and Left: A Naïve Approach

The concept is simple. We will count the minimum number of water tiles needed to reach the exit. The exit is in the bottom-right, so we’ll start there with 0, and then continue filling one diagonal at a time. In the following grid, light blue/cyan squares are water, white is land, and black/red are impassable walls.

| . | . | . | . | . | . | ||||

| . | |||||||||

| . | |||||||||

| . | |||||||||

| . | |||||||||

| . | |||||||||

| 2 | |||||||||

| 2 | 2 | ||||||||

| 2 | 1 | ||||||||

| 2 | 2 | 1 | 0 |

A water tile increases the count by 1, a land tile does not. You can interpret the number in each square as meaning how many water tiles you need to cross to reach the exit from that square, including itself.

| . | . | . | |||||||

| . | |||||||||

| . | |||||||||

| 3 | |||||||||

| 3 | 3 | ||||||||

| 5 | 4 | 3 | |||||||

| 5 | 4 | 3 | 2 | ||||||

| 5 | 4 | 3 | 2 | 2 | |||||

| 5 | 4 | 3 | 2 | 1 | |||||

| 5 | 4 | 3 | 2 | 2 | 1 | 0 |

As we work outwards, we’ll start to encounter squares with multiple options. A simple formula (which I’ll call the naïve approach) is to look at the square directly below and directly to the right, then take the lesser of the two values and add 1 (for water tile) or 0 (for land tile). For example, the “3” highlighted in red comes from the smaller of the land square to the right (cost of 3) and the water tile below (cost of 4), plus 0.

For programming, a simple rule like this is great, because all you need to do is write a single nested loop to fill in the whole grid:

grid[1][1] = 0

for i in (1..n)

for j in (1..m)

// use a large value when i-1 or j-1 is out of range (edges)

below = (i > 1) ? grid[i-1][j] : 9999

right = (j > 1) ? grid[i][j-1] : 9999

if (below < right)

best = below

else

best = right

grid[i][j] = best + (isWater ? 1 : 0)

(Side-note: this pseudo-code fills in the grid one row at a time, rather than one diagonal at a time, however both give the same result. The proof for that is left as an exercise for the reader.)

The completed grid using this formula looks like this:

| 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | 4 | |

| 4 | 4 | 4 | 4 | 4 | 4 | 3 | 3 | 3 | 4 |

| 4 | 4 | 4 | 4 | 4 | 3 | 3 | 3 | 3 | 3 |

| 7 | 7 | 7 | 7 | 6 | 5 | 4 | 3 | 3 | 3 |

| 8 | 8 | 8 | 7 | 6 | 6 | 5 | 4 | 3 | 3 |

| 7 | 7 | 8 | 8 | 7 | 6 | 6 | 5 | 4 | 3 |

| 6 | 6 | 7 | 7 | 7 | 6 | 5 | 4 | 3 | 2 |

| 5 | 5 | 6 | 6 | 6 | 5 | 4 | 3 | 2 | 2 |

| 5 | 5 | 5 | 6 | 5 | 4 | 3 | 2 | 1 | |

| 5 | 5 | 5 | 5 | 4 | 3 | 2 | 2 | 1 | 0 |

Now if we wanted the path with the fewest water tiles from the top-left corner to the bottom-right corner, we would start at the top-left and move either down or right, to whichever square has the lower value. This gives us a path travelling right all the way to the 2nd last tile, then 2 down, then 1 right, and then straight down to the exit.

Moving in four directions: Breadth-First Search

You may notice that there is something odd about the grid above. The connected land tiles near the top should all be able to reach the exit in 3 water tiles, but the grid shows a range of values from 3 to 8. That’s because our naïve approach assumed that we can only move in 2 directions: down and right. In the actual game, you can move in 4 directions: up, down, left, and right!

So how do we account for the fact that we can move in all 4 directions? Clearly, filling in one row (or one diagonal) at a time won’t work. Instead, we must fill in the grid starting with the neighbours of the smallest values. In other words, we need a breadth-first search.

| . | . | . | . | . | |||||

| . | |||||||||

| . | |||||||||

| . | |||||||||

| . | |||||||||

| 3 | |||||||||

| 3 | 2 | ||||||||

| 3 | 2 | 2 | |||||||

| 3 | 2 | 1 | |||||||

| 3 | 2 | 2 | 1 | 0 |

It starts pretty similarly. Above, we’ve filled in all the neighbours of all value “2” and below squares.

| 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | |

| 3 | 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 4 |

| 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 3 | 3 |

| 3 | 3 | 3 | 4 | 4 | 4 | 3 | 3 | 3 | |

| 3 | 3 | 4 | 4 | 3 | 3 | ||||

| 4 | 4 | 4 | 3 | ||||||

| 4 | 3 | 2 | |||||||

| 4 | 3 | 2 | 2 | ||||||

| 4 | 3 | 2 | 1 | ||||||

| 4 | 3 | 2 | 2 | 1 | 0 |

Next, we fill in all the neighbours of every “3”. Many of those neighbours become a “3” too, so we keep going until there are none left.

| 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | |

| 3 | 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 4 |

| 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 3 | 3 |

| 3 | 3 | 3 | 4 | 5 | 4 | 4 | 3 | 3 | 3 |

| 3 | 3 | 4 | 5 | 5 | 5 | 4 | 3 | 3 | |

| 4 | 4 | 5 | 5 | 4 | 3 | ||||

| 5 | 5 | 5 | 4 | 3 | 2 | ||||

| 5 | 4 | 3 | 2 | 2 | |||||

| 5 | 4 | 3 | 2 | 1 | |||||

| 5 | 4 | 3 | 2 | 2 | 1 | 0 |

Above, we’ve filled in all the neighbours of every 4.

| 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | 3 | |

| 3 | 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 4 |

| 3 | 3 | 3 | 3 | 4 | 3 | 3 | 3 | 3 | 3 |

| 3 | 3 | 3 | 4 | 5 | 4 | 4 | 3 | 3 | 3 |

| 3 | 3 | 4 | 5 | 5 | 5 | 5 | 4 | 3 | 3 |

| 4 | 4 | 5 | 6 | 6 | 5 | 6 | 5 | 4 | 3 |

| 5 | 5 | 6 | 7 | 7 | 6 | 5 | 4 | 3 | 2 |

| 5 | 5 | 6 | 6 | 6 | 5 | 4 | 3 | 2 | 2 |

| 5 | 5 | 5 | 6 | 5 | 4 | 3 | 2 | 1 | |

| 5 | 5 | 5 | 5 | 4 | 3 | 2 | 2 | 1 | 0 |

Above is the completed BFS grid. Compared to the naïve grid, some of the squares now have smaller values and the largest numbers are concentrated in the centre instead of on the left. Intuitively, this is because it takes more steps to get out of the middle of the lake. In particular, the three islands in the centre now have values of (5, 5, 6) versus (6, 6, 6) before.

To write a BFS (breadth-first search) in code, the key is to keep a queue of squares to visit next, ordered by the lowest value first. This is also known as a priority queue; virtually every classic shortest-path algorithm will use a priority queue of some sort. The pseudo-code might look something like this:

grid[1][1] = 0

node = new Node(1, 1, 0) // row 1, col 1, value 0

queue.add(node)

while queue not empty:

current = queue.next()

for node in current.neighbours()

if grid[node.row][node.col] is not set

node.value = current.value + (node.isWater() ? 1 : 0)

grid[node.row][node.col] = node.value

queue.add(node)

This code requires a bit more thought, as well as a longer theoretical runtime. We still fill in every square exactly once, so it’s guaranteed to finish. However, the main increase in complexity comes from the priority queue. In the worst case, all n nodes could be enqueued at once. Depending on the implementation chosen, priority queues generally take up to O(log n) for each insert/remove, for a total runtime of O(n log n) versus just O(n) in the naïve algorithm (where n is the number of squares on the board). In practice, this is still very fast, and certainly will finish before any brute-force or backtracking algorithm will. Most importantly, it gives the correct solution!

Back to the Game

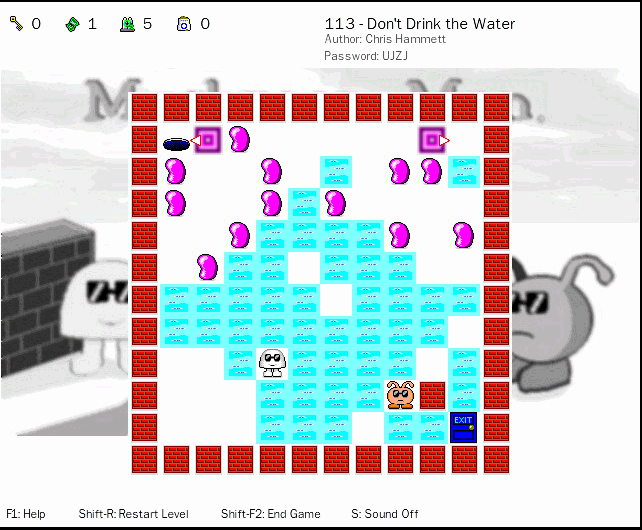

So does the BFS grid help us solve the level? Yes it does! The following screenshot shows the island that I described being stuck on in the first paragraph. The BFS confirms that I would need to cross 6 water tiles to reach the exit, but I only had 5 air. However, there are two other islands that only require 5 water tiles to exit. Of those two, one of them can be reached with 5 air remaining.

Reaching the exit from this point is impossible. At least 6 water tiles need to be crossed, but we only have 5 oxygen.

The following is a quick look at the optimal path to reach all 4 oxygen tanks, starting with the top-right. The numbers represent the air remaining. The yellow squares are the oxygen tank locations (each tank gives +3 air).

| < | . | 2 | 2 | 2 | > | 3 | |||

| 2 | 2 | 2 | |||||||

| 1 | 2 | 2 | 2 | 2 | |||||

| 0 | |||||||||

| 3 | 2 | ||||||||

| 5 | |||||||||

| 4 | |||||||||

| 5 | 2 | 3 | |||||||

| . | |||||||||

| E |

The winning strategy is then to reach the middle tank (with 5 air) and then take the shortest path to the exit (also 5 water tiles, following the adjacent water tiles numbered 5-4-3-2-1 in the BFS grid, i.e. up and to the right). The solution skips the bottom-left tank, which we just proved to be a devious trap!

0 Comments.